I have always opposed the development, promotion, and integration of generative artificial intelligence into the workflow of any job or industry. In my view, the benefits AI could potentially bring are vastly outweighed by the environmental, intellectual, and privacy problems that the push for mass public acceptance has already caused. I went into this week's posted talk by Lucas Wright from UBC to see whether my perspective could be changed or influenced. I am aware that the tools being created have some fringe positives, and I wanted to see whether there were others I had missed, or any solutions to the concerns I raised above.

One part of Wright’s talk that resonated with me was his point that some people might falsely “endow wisdom on these tools” because of the apparent accuracy of the answers they get from chatbots or AI agents. Wright counters this by showing that AI is, in reality, just doing highly advanced word prediction to produce what its data suggests is the most likely response. One flaw in that system is that the quality of an AI's answer scales with the quality of the prompt it is given. Wright himself tells a story about asking ChatGPT where the best place in Atlin, B.C., would be for paddleboarding. ChatGPT gave a response that didn’t take into account how dangerous the “best” route across the rough glacial lake would be, so Wright had to ask again, this time specifically requesting the safest route from a particular starting point, written from the perspective and in the language of an experienced guide. Because Wright was already familiar with the area, he recognized that the first answer was not what he needed and could put him in danger, so he knew to prompt again with more detail. This story, however, raises one of my key concerns about artificial intelligence: it is often advertised as an all-in-one source of knowledge whose answers are accurate and need no checking.

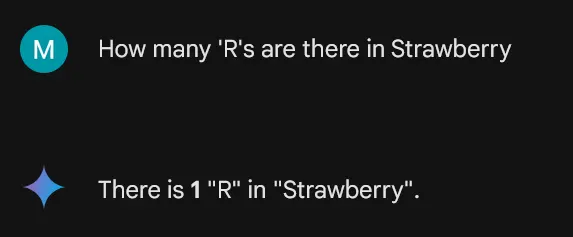

This article explores why artificial intelligence struggles to spell words, as in the viral meme. Essentially, artificial intelligence never sees the words or sentences themselves, only a collection of tokens that stand for fragments of words, whole words, and everything in between. This means that the quality of an answer depends on the quality of the tokens within the tool. While the spelling example is clearly humorous and easy to disprove, it would be much harder to tell that the first answer in Wright’s example was wrong if the prompter had no experience with the lakes around Atlin. In my eyes, this is the danger of relying on AI. If someone has no reason to doubt the output, they may accept an incorrect answer without question and end up paddleboarding into dangerous waters.

Refinement loops, as Wright calls them in his talk, are supposed to guard against this possibility: the user repeatedly prompts the AI to expand on individual parts of its answer to improve the quality of the final output. In my eyes, a tool that must be used repeatedly to produce a single quality output should not, at the very least, be viewed as the high-quality, knowledgeable tool that artificial intelligence is often advertised to be. I compare it to a hammer, which also needs multiple swings to drive a nail all the way into a piece of wood; however, hammers are not advertised as driving a nail in perfectly with one swing every time. This is my problem with artificial intelligence: its marketing presents it as one hundred percent accurate every time, even though it typically takes multiple prompts to produce something halfway usable.

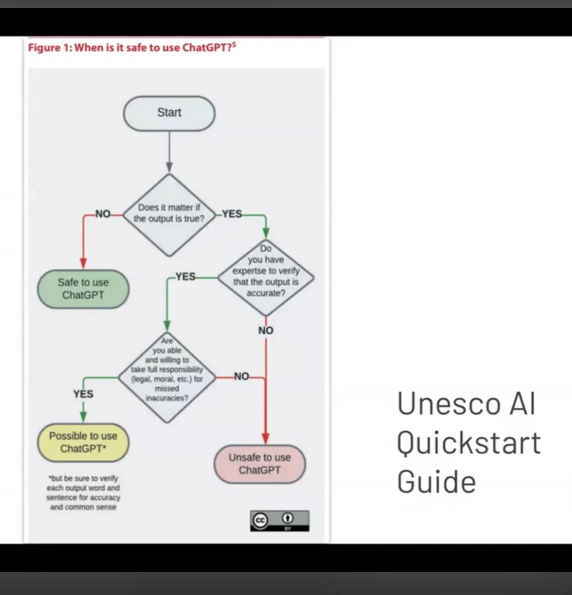

The UNESCO AI Quickstart flowchart that Wright brought up helped me clarify what my view on the future use of artificial intelligence should be. The flowchart is meant to help readers decide whether it is safe to use AI for a given prompt in the first place. If it doesn’t matter how accurate the output is, then using AI to answer the prompt is safe. Otherwise, AI is either unsafe to use or usable only with careful analysis of the output, depending on how well the prompter is able to critically evaluate and reflect on the answer. This flowchart leads me to think that the safest uses of artificial intelligence are situations where the output would not matter much anyway, whether or not an AI produced it. The more important the output, the harder it is to be sure that it is accurate and safe enough to use, which calls the supposed quality of the tool into question. In other words, the more important the output, the less the flowchart seems to recommend using AI to answer the question in the first place. And even if an inaccurate output does not harm the prompter directly, it could harm others who trust what the prompter shares with them.

The only solution I can think of to these problems, however unlikely it might be, would be to privatize AI tools or create a licensing system for accessing them. People who used AI tools improperly or dangerously could have their licenses revoked and lose access to those programs. This would reduce environmental impact and serve as a real consequence for those who use AI to create dangerous or misleading content. However, given the profit-driven nature of these companies, I expect this solution will never be achieved; they have shown time and again that they will ignore laws and social impact in pursuit of financial gain. They will continue to push their technology as an absolute necessity for everyone in society, despite the harm it causes. This is why I am so firmly against artificial intelligence: because these tools are tied to for-profit companies, those companies are incentivized to keep expanding and scaling them up to increase revenue. It is far more likely that these companies will keep pushing the limits until they go too far, leaving us unable to return to the world as it was before these harmful tools were integrated.

While there are some niche scenarios where artificial intelligence could be genuinely helpful to people, I still believe that society and the world as a whole would be made worse by the mass integration of a tool that essentially generates guesses, built on stolen information, to answer the questions of prompters who may lack the critical analysis skills to evaluate the validity of the output. We should either begin rolling back AI tools or use licensing to remove them from general public access outright, limiting the danger and damage these tools have already caused in order to protect society and the environment.